Lessons from AI on Community Development: A Bahá’í View

I have recently spent a great deal of time studying and using machine learning in my research. One of the most interesting aspects of this work has been learning about the evolution of neural networks, the scientific and mathematical structures that now underlie much of modern artificial intelligence. Reflecting on these developments, I have begun to wonder whether they may also offer useful insights into how institutions and communities develop knowledge and learn over time.

There is already a substantial literature on using AI to analyze organizations and social systems. Yet there has been comparatively little reflection on how the ideas behind modern machine learning might illuminate questions of institutional learning, management, and community development. From a Bahá’í perspective, this is especially intriguing because certain features of the Bahá’í administrative order appear, at least by analogy, to resemble concepts that have become central in modern AI: gradient descent, skip connections, and probabilistic learning. The layered structure of local, regional, national, and international institutions, together with the institution of the Counsellors and their Auxiliary Boards, even suggests parallels to the layered architectures and nonlocal connections found in neural networks.

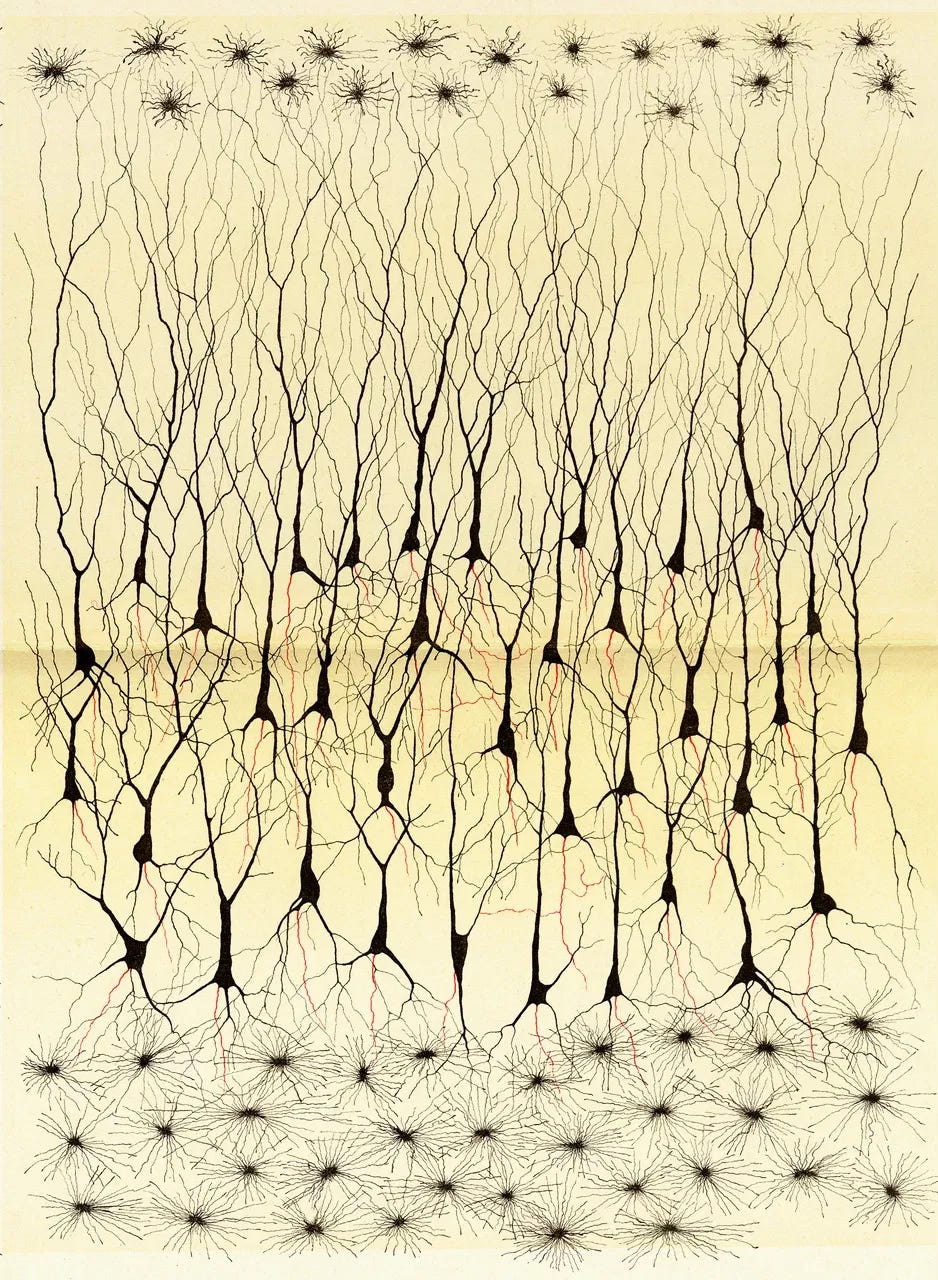

The development of machine learning itself began as an attempt to model biological cognition. In 1943, neurophysiologist Warren McCulloch and mathematician Walter Pitts proposed one of the earliest neural-network models as an electrical circuit meant to approximate how neurons might function. In 1949, Donald Hebb advanced the influential idea that neural pathways are strengthened through repeated use, often summarized as “neurons that fire together wire together.” In 1951, Marvin Minsky and Dean Edmonds built SNARC, an early hardware realization of a neural network. Using vacuum tubes and electromechanical components, it simulated a rudimentary learning process somewhat analogous to a rat learning to navigate a maze through reinforcement.

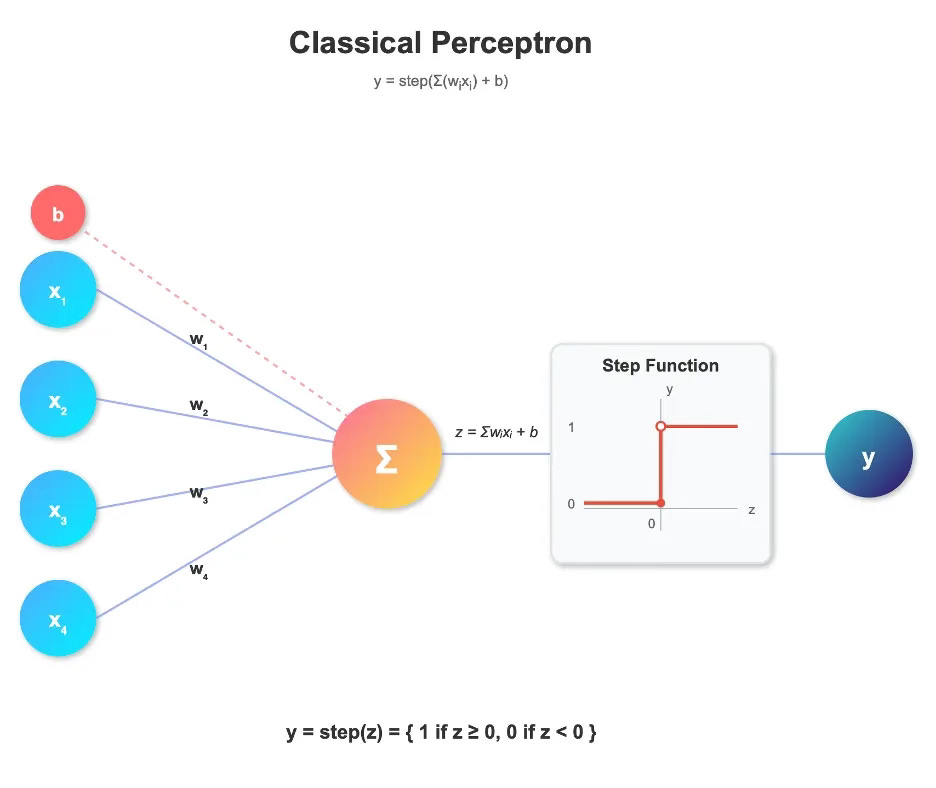

A major advance came in 1957, when Frank Rosenblatt invented the perceptron. The perceptron received multiple input values, multiplied them by weights reflecting their relative importance, summed them, added a bias term, and then passed the result through a threshold function to produce an output. If the total signal exceeded a certain threshold, the system produced one result; if not, it produced another.

The learning process then compared that output to the desired one. If the result was wrong, the system adjusted the weights and bias according to a simple rule. In this way, the perceptron could learn certain kinds of pattern recognition, and Rosenblatt promoted it as a major step toward artificial intelligence. For a time, enthusiasm was high.
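The forward pass and update rule described above can be sketched in a few lines of Python. This is a minimal illustration rather than Rosenblatt's original formulation: the function names, the zero threshold, the learning rate, and the choice of logical AND as the training task are all assumptions made for the example.

```python
# Minimal sketch of a perceptron; every name here is illustrative.

def predict(weights, bias, inputs):
    """Weighted sum plus bias, passed through a hard threshold at zero."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0


def train(samples, epochs=20, learning_rate=1.0):
    """Perceptron rule: weights and bias change only when the output is wrong."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)  # -1, 0, or +1
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


# Truth table for logical AND, a linearly separable task (assumed example).
AND_SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

and_weights, and_bias = train(AND_SAMPLES)
and_outputs = [predict(and_weights, and_bias, x) for x, _ in AND_SAMPLES]
print(and_outputs)  # [0, 0, 0, 1]
```

Each correction moves the weights toward the inputs that should have fired and away from those that should not, which is the whole of the "simple rule" in this sketch.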

However, later work showed that a single-layer perceptron had severe limitations. Because its decision boundary is linear, it can separate two classes only with a straight line (or, in higher dimensions, a flat hyperplane). It could handle simple logical functions such as AND and OR, but not XOR, whose two "true" cases cannot be divided from its two "false" cases by any single line. This limitation became famous after Marvin Minsky and Seymour Papert published Perceptrons in 1969, which helped cool optimism about early neural networks.
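The XOR limitation can be checked with a short argument. As a sketch, assume a single threshold unit with weights $w_1, w_2$ and bias $b$ (symbols introduced here for illustration) that outputs 1 exactly when $w_1 x_1 + w_2 x_2 + b > 0$. Reproducing XOR would require all four of the following conditions at once:

$$
\begin{aligned}
(0,0) \mapsto 0 &: \quad b \le 0 \\
(1,0) \mapsto 1 &: \quad w_1 + b > 0 \\
(0,1) \mapsto 1 &: \quad w_2 + b > 0 \\
(1,1) \mapsto 0 &: \quad w_1 + w_2 + b \le 0
\end{aligned}
$$

Adding the two middle inequalities gives $w_1 + w_2 + 2b > 0$, so $w_1 + w_2 + b > -b \ge 0$, which contradicts the last condition. No choice of weights and bias satisfies all four.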

The way beyond this limitation was to use multiple layers of perceptrons, each feeding into the next. Multi-layer networks made it possible to represent much more complex relationships in data. Over time, researchers came to see that one of the great strengths of such networks was not merely that they fit outputs, but that they learned increasingly useful internal representations of the input itself. In this sense, neural networks did not simply fit coefficients to a fixed mathematical basis chosen in advance; they learned, through training, how to represent the relevant structure of the data. This is one reason they proved useful in areas such as adaptive filtering in telecommunications and, later, handwriting recognition in banking and postal systems.
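To see how a second layer changes what can be represented, here is a small sketch of a two-layer network of threshold units that computes XOR. The weights are chosen by hand for illustration, not learned, and the helper names `unit` and `xor_network` are hypothetical.

```python
# Sketch: two layers of threshold units computing XOR with hand-picked weights.

def unit(weights, bias, inputs):
    """A single threshold unit: fires (1) when the weighted sum plus bias is positive."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0


def xor_network(a, b):
    # Hidden layer: two intermediate representations of the input.
    h_or = unit((1, 1), -0.5, (a, b))    # fires when at least one input is 1
    h_and = unit((1, 1), -1.5, (a, b))   # fires only when both inputs are 1
    # Output layer: "OR but not AND", which is linear in the hidden units.
    return unit((1, -1), -0.5, (h_or, h_and))


xor_outputs = [xor_network(a, b) for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]]
print(xor_outputs)  # [0, 1, 1, 0]
```

The hidden units re-describe the input in terms that make the final decision linearly separable, a toy version of the learned internal representations discussed above.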

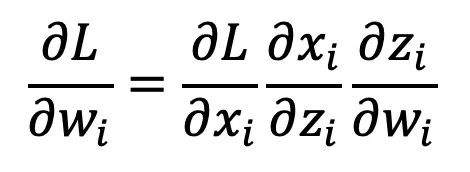

A particularly powerful method for training such networks was backpropagation. In 1986, David Rumelhart, Geoffrey Hinton, and Ronald Williams published their influential work showing how multi-layer networks could be trained effectively by propagating error information backward through the network. The mathematics relied on the chain rule from calculus: if one wants to know how a final output depends on an earlier parameter, one can determine this by multiplying together the sensitivities of each intermediate step. Applied to neural networks, this meant that one could calculate how each weight in each layer contributed to the final error and then adjust the system accordingly.
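The chain-rule bookkeeping can be made concrete with a deliberately tiny network: one input, one hidden unit, one output, and a squared-error loss. This is a sketch under those assumptions, not the formulation used in the 1986 paper; the names `forward`, `backprop`, and `numerical_gradient` are illustrative.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(w1, w2, x):
    h = sigmoid(w1 * x)   # hidden activation
    y = sigmoid(w2 * h)   # output activation
    return h, y


def loss(w1, w2, x, target):
    _, y = forward(w1, w2, x)
    return 0.5 * (y - target) ** 2


def backprop(w1, w2, x, target):
    """Multiply local sensitivities backward from the loss to each weight."""
    h, y = forward(w1, w2, x)
    dloss_dy = y - target          # how the loss changes with the output
    dy_dz2 = y * (1.0 - y)         # sigmoid slope at the output unit
    dh_dz1 = h * (1.0 - h)         # sigmoid slope at the hidden unit
    grad_w2 = dloss_dy * dy_dz2 * h
    grad_w1 = dloss_dy * dy_dz2 * w2 * dh_dz1 * x
    return grad_w1, grad_w2


def numerical_gradient(w1, w2, x, target, eps=1e-6):
    """Central finite differences, used only to check the chain-rule result."""
    g1 = (loss(w1 + eps, w2, x, target) - loss(w1 - eps, w2, x, target)) / (2 * eps)
    g2 = (loss(w1, w2 + eps, x, target) - loss(w1, w2 - eps, x, target)) / (2 * eps)
    return g1, g2


analytic = backprop(0.5, -0.3, 1.5, 1.0)
numeric = numerical_gradient(0.5, -0.3, 1.5, 1.0)
print(analytic, numeric)
```

The two gradients should agree to several decimal places, which is a common sanity check when implementing backpropagation by hand.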

Although the chain rule itself had been known for centuries, its application to neural networks was complicated by the fact that the original perceptron used a sharp threshold function. The slope of such a function is zero everywhere except at the jump itself, where it is undefined, so it offers no usable gradient. The crucial insight was that the threshold could be replaced by smoother activation functions, such as the sigmoid, which approximate the threshold behavior while allowing derivatives to be calculated. Interestingly, versions of backpropagation had been discovered, forgotten, or neglected more than once before becoming widely adopted. Even that history may suggest something important about how institutions learn, forget, and recover valuable ideas.
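To make the point about smooth activations concrete, the standard sigmoid and its derivative can be written as follows (the symbols $\sigma$ and $z$ are notation chosen here, not taken from the text above):

$$
\sigma(z) = \frac{1}{1 + e^{-z}}, \qquad \sigma'(z) = \sigma(z)\,\bigl(1 - \sigma(z)\bigr)
$$

The derivative is positive for every finite $z$ and reaches its maximum of $\tfrac{1}{4}$ at $z = 0$. A hard threshold, by contrast, has slope $0$ for all $z \neq 0$, so an error signal multiplied by that slope carries no information about which direction to adjust the weights.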

The next major breakthrough came in the mid-2000s, when Geoffrey Hinton and others demonstrated the power of deeper architectures, including deep belief networks. These systems emphasized not only classification but also learning the probabilistic structure of the input data itself. They did so through models such as restricted Boltzmann machines, which attempted to reproduce the statistical distribution of their inputs. Later developments in generative modeling, including variational autoencoders and diffusion models, extended this general ambition: not merely to produce correct outputs, but to learn the structure of the world from which the inputs arise.

Several of these ideas may be suggestive for anyone interested in how institutions and communities learn.

The first is the simple but powerful logic of gradient descent and backpropagation. In machine learning, the system compares its output to a desired goal, measures the error, and then determines how changes in different parts of the network would reduce that error. In a human institution, the analogue would be a process in which outcomes are compared to collective aims, and reflection is undertaken at every level to ask: what changes can we make that would improve the functioning of the whole?

This, however, immediately raises the problem of attribution. In a neural network, the causal relations among nodes are mathematically specified, and the contribution of each parameter to the output can, at least in principle, be computed. In human institutions, these relationships are much less clear. Information is incomplete, motives are mixed, and lines of influence are often obscured. Moreover, social and hierarchical power can distort learning by introducing incentives to hide failure, redirect blame, or protect status. Whenever the goals of individuals or subgroups become misaligned with the goals of the whole, institutional learning is impaired.

Here, the Bahá’í principle of consultation offers a strikingly different model. This principle was established by Bahá’u’lláh, the Prophet-Founder of the Bahá’í Faith, and explained by ‘Abdu’l-Bahá, His son and the appointed interpreter of His writings. Shoghi Effendi, the Guardian of the Bahá’í Faith, built it into the functioning of the Bahá’í Administrative Order and explained how institutions should apply it in practice. Consultation seeks to reduce the distorting effects of ego and power in collective decision-making and to open the process to divine inspiration. In true consultation, ideas are detached from personal ownership and evaluated on their merits. The aim is not victory, self-assertion, or the defense of factional positions, but the discovery of truth and the advancement of the common good. Because the process does not reward or punish participants on the basis of whose idea “wins,” it lowers the degree to which learning is corrupted by personal interest.

Equally important, once a decision has been made, it is supported by the group as a whole until a later cycle of reflection or consultation indicates that it should be revised. This creates a social environment in which the actual consequences of a decision can be more clearly observed, rather than being immediately obscured by partisan resistance, sabotage, or attempts at vindication. In that sense, consultation may be understood as a mechanism for improving the fidelity of institutional learning.

In elaborating on the principles of consultation, Shoghi Effendi cited these words of ‘Abdu’l-Bahá:

The prime requisites for them that take counsel together are purity of motive, radiance of spirit, detachment from all else save God, attraction to His Divine Fragrances, humility and lowliness amongst His loved ones, patience and long-suffering in difficulties and servitude to His exalted Threshold. Should they be graciously aided to acquire these attributes, victory from the unseen Kingdom of Bahá shall be vouchsafed to them…. The members thereof must take counsel together in such wise that no occasion for ill-feeling or discord may arise. This can be attained when every member expresseth with absolute freedom his own opinion and setteth forth his argument. Should any one oppose, he must on no account feel hurt for not until matters are fully discussed can the right way be revealed. The shining spark of truth cometh forth only after the clash of differing opinions. If after discussion a decision be carried unanimously, well and good; but if, the Lord forbid, differences of opinion should arise, a majority of voices must prevail.

(Consultation: A Compilation) www.bahai.org/r/644110934

A second useful analogy comes from the idea of skip connections in deep neural networks. These were introduced to help address problems such as the vanishing gradient, in which error signals become too weak as they pass through many layers, making training increasingly difficult. Skip connections allow information to bypass intermediate layers, preserving signal quality and improving learning in very deep systems.
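The effect can be illustrated numerically. The sketch below is a simplified, one-dimensional model, not a real deep network: each layer is a single sigmoid unit with a fixed weight, and the function names and parameter values are assumptions chosen for the example. It compares how strongly the output depends on the input with and without an identity shortcut around each layer.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def plain_gradient(depth, x=0.5, weight=1.0):
    """d(output)/d(input) for a chain of layers h <- sigmoid(weight * h)."""
    grad, h = 1.0, x
    for _ in range(depth):
        a = sigmoid(weight * h)
        grad *= weight * a * (1.0 - a)   # each factor is at most 0.25 * weight
        h = a
    return grad


def residual_gradient(depth, x=0.5, weight=1.0):
    """Same chain, but each layer computes h <- h + sigmoid(weight * h)."""
    grad, h = 1.0, x
    for _ in range(depth):
        a = sigmoid(weight * h)
        grad *= 1.0 + weight * a * (1.0 - a)   # the shortcut contributes the 1
        h = h + a
    return grad


plain = plain_gradient(20)
residual = residual_gradient(20)
print(plain, residual)
```

In the plain chain, twenty factors of at most 0.25 multiply to a vanishingly small number. With the shortcut, every factor is at least 1, so the signal reaching the earliest layer cannot shrink toward zero in this simplified setting.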

In institutions, one can imagine analogous arrangements: channels that connect higher levels of leadership more directly with local realities, without requiring every signal to be filtered through a purely hierarchical chain. Such channels can reduce distortion, preserve responsiveness, and help the system remain sensitive to conditions on the ground.

Within the Bahá’í administrative order, something like this can be seen in the institution of the Counsellors and their Auxiliary Boards. Without displacing the authority of elected bodies, they provide channels of encouragement, insight, and communication that cut across formal administrative layers. Their role helps maintain a more direct connection between local conditions and broader levels of guidance and learning. In this sense, they resemble institutional “skip connections” that preserve signal strength across a large and distributed system.

A third lesson from AI concerns probabilistic learning: the need for a system not only to optimize its outputs but also to develop an increasingly accurate model of the environment in which it operates. In machine learning, this often means learning the probability distribution of the inputs, identifying which variables matter most, how they vary, and what structure underlies the observed data. Such a system does not simply react; it learns to understand the world to which it must respond.

In institutional life, the analogue would be an ever-deepening awareness of the surrounding environment: the social conditions, human capacities, cultural realities, constraints, opportunities, and patterns of change that shape action. For a company, this might include raw materials, labor conditions, customer behavior, or market volatility. For a community, it includes the spiritual, social, and material conditions that influence growth and service.

In the Bahá’í context, one prominent expression of this kind of learning can be found in the institute process and in the broader pattern of action, reflection, consultation, and study that now characterizes Bahá’í community development. The institute process is not simply a curriculum of classes. It is part of a larger mode of collective learning in which individuals and communities study, act, reflect on experience, and then return to action with greater insight. Knowledge is generated locally, shared across levels of the community, and progressively refined. What emerges is not merely the execution of a plan, but the gradual development of a community capable of understanding its environment and responding to it with increasing wisdom, unity, and effectiveness.

Of course, such comparisons have limits. Human communities are not neural networks, and spiritual civilization cannot be reduced to a computational model. Human beings possess moral agency, consciousness, and spiritual aspiration in ways that no machine does. Still, analogies can be illuminating. They can help us recognize patterns of learning, feedback, representation, and coordination that might otherwise remain obscure.

Perhaps one of the most interesting lessons from AI is not that communities should become more machine-like, but that the science of learning itself, when examined carefully, can shed unexpected light on the wisdom already embedded in systems of collective life. From a Bahá’í point of view, it is striking that principles such as consultation, nonpartisanship, layered institutions, and cycles of action and reflection seem not only spiritually sound but also deeply consistent with what one might expect from any system genuinely oriented toward learning and development.